Invoice processing is one of the most common automation scenarios I encounter across organisations. Finance teams are still manually opening PDFs, reading invoice data, and entering values into ERP systems.

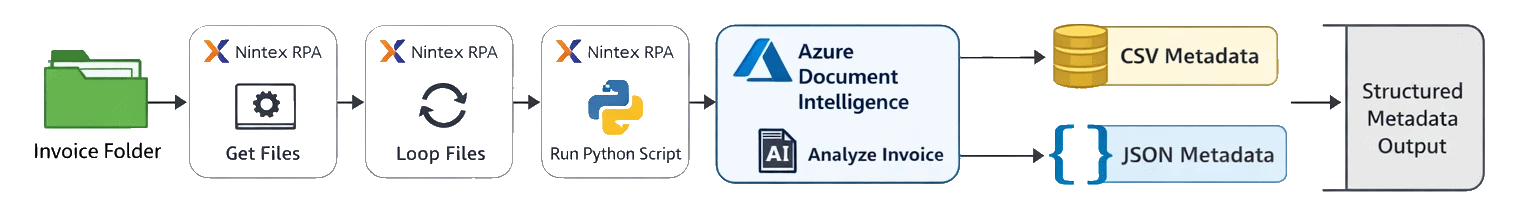

With Nintex RPA, Python, and Azure Document Intelligence, we can build an intelligent document processing (IDP) pipeline that automatically extracts invoice metadata and converts it into structured formats such as CSV and JSON.

The Automation Architecture

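The overall automation pattern is straightforward. Nintex orchestrates the workflow, while Python integrates with Azure’s AI services: a Python script receives the invoice file name, sends the PDF to Azure Document Intelligence, and writes the extracted data to an output folder.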

The goal is to transform an unstructured invoice PDF into structured business data that downstream systems can consume.
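The scripts later in this article expect the invoice file name as a command-line argument, which the Nintex workflow supplies when it launches them. Here is a minimal sketch of that hand-off, assuming the same `C:\WorkSpace\IDP` input and output folders those scripts use. `find_pdf_argument`, `build_paths` and the script name `extract_invoice.py` are hypothetical names chosen for illustration.

```python
import ntpath


def find_pdf_argument(argv):
    """Return the last argument that looks like a PDF file name, or None."""
    pdf_name = None
    for arg in argv[1:]:  # argv[0] is the script path itself
        if arg.lower().endswith(".pdf"):
            pdf_name = arg
    return pdf_name


def build_paths(pdf_name, extension,
                input_dir="C:\\WorkSpace\\IDP\\Input",
                output_dir="C:\\WorkSpace\\IDP\\Output"):
    """Map an invoice file name to its input path and its output path."""
    # ntpath builds Windows-style paths even when run on another OS
    input_path = ntpath.join(input_dir, pdf_name)
    output_path = ntpath.join(output_dir, pdf_name + extension)
    return input_path, output_path


# Simulate the arguments a workflow might pass to the (hypothetical) script
example_argv = ["extract_invoice.py", "INV-1002.pdf"]
print(build_paths(find_pdf_argument(example_argv), ".csv"))
```

Keeping the contract this small means the Nintex side only has to know the file name and where to pick up the result.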

Why Azure Document Intelligence?

Azure Document Intelligence provides AI models that can extract structured data from documents without custom training. From recent proof-of-concept (POC) builds, and from testing various other services, I have found it to be the most accurate model currently available, with the least setup overhead and hassle.

The prebuilt-invoice model automatically detects fields such as:

- Vendor Name

- Invoice Number

- Invoice Date

- Subtotal

- Tax

- Invoice Total

- Billing and shipping addresses

- Line item descriptions

- Line item quantities

- Unit prices

- Line totals

This means we can quickly convert invoice PDFs into structured metadata with minimal code.
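In code, each of these fields is read by a fixed key from the model's output. The keys below are the `prebuilt-invoice` field names used by the scripts later in this article, plus the two address fields. One detail matters: in the `azure-ai-formrecognizer` SDK, amount fields such as `InvoiceTotal` come back as currency objects that carry the number in an `.amount` attribute, not as plain numbers.

This is a minimal sketch that runs without an Azure resource because it uses `SimpleNamespace` objects as stand-ins for the SDK's field objects. `FIELD_KEYS`, `ITEM_KEYS` and `field_value` are hypothetical names.

```python
from types import SimpleNamespace

# Display name -> prebuilt-invoice field key (invoice-level fields)
FIELD_KEYS = {
    "Vendor Name": "VendorName",
    "Invoice Number": "InvoiceId",
    "Invoice Date": "InvoiceDate",
    "Subtotal": "SubTotal",
    "Tax": "TotalTax",
    "Invoice Total": "InvoiceTotal",
    "Billing Address": "BillingAddress",
    "Shipping Address": "ShippingAddress",
}

# Line items sit under "Items"; each item is read with these keys
ITEM_KEYS = ("Description", "Quantity", "UnitPrice", "Amount")


def field_value(field):
    """Return a CSV/JSON-friendly value for an extracted field, or "" if missing."""
    if field is None or field.value is None:
        return ""
    value = field.value
    # Currency-like values expose the number as .amount; other types pass through
    if hasattr(value, "amount"):
        return value.amount
    return value


# Stand-ins shaped like extracted fields (NOT real SDK objects)
fields = {
    "VendorName": SimpleNamespace(value="ABC Supplies"),
    "InvoiceTotal": SimpleNamespace(value=SimpleNamespace(amount=1150.0, symbol="$")),
}
for label, key in FIELD_KEYS.items():
    print(label, "=", field_value(fields.get(key)))
```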

CSV, JSON, or Both?

When designing document automation solutions, one of the first architectural decisions is how the extracted metadata should be structured for downstream systems.

Two of the most common formats are CSV and JSON. Each serves a slightly different purpose, and in many real-world implementations the best approach is to support both formats simultaneously.

Let’s break down when each format makes the most sense.

CSV – Best for Tabular Data and Reporting

CSV (Comma-Separated Values) is one of the most widely supported data formats in business systems. Because CSV represents data in a flat table structure, it integrates easily with tools such as:

- Excel

- Power BI

- SQL databases

- Finance and ERP import utilities

- Data warehousing pipelines

For invoice processing, CSV works particularly well when each invoice line item becomes a row in a table, with the invoice-level fields (vendor, number, date, totals) repeated on every row.

This makes CSV ideal for:

- Financial reconciliation

- Analytics and reporting

- Bulk ERP imports

- Spreadsheet-based processing
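Flattening is the key step: the invoice-level values are repeated on every line-item row. This is a minimal sketch using only the standard library. It assumes the invoice has already been extracted into a plain dictionary shaped like the JSON sample later in this article. `invoice_to_rows` and `COLUMNS` are hypothetical names.

```python
import csv
import io

COLUMNS = ["VendorName", "InvoiceNumber", "InvoiceDate", "SubTotal",
           "TotalTax", "InvoiceTotal", "Description", "UnitPrice",
           "Quantity", "Total"]


def invoice_to_rows(invoice):
    """Yield one flat dict per line item, repeating the invoice-level fields."""
    header = {key: invoice.get(key, "") for key in COLUMNS[:6]}
    for item in invoice.get("LineItems", []):
        row = dict(header)
        row.update({key: item.get(key, "") for key in COLUMNS[6:]})
        yield row


invoice = {
    "VendorName": "ABC Supplies", "InvoiceNumber": "INV-1002",
    "InvoiceDate": "2025-02-12", "SubTotal": 1000, "TotalTax": 150,
    "InvoiceTotal": 1150,
    "LineItems": [
        {"Description": "Widget A", "UnitPrice": 50, "Quantity": 10, "Total": 500},
        {"Description": "Widget B", "UnitPrice": 25, "Quantity": 20, "Total": 500},
    ],
}

# Write to an in-memory buffer; swap in open(..., newline="") for a real file
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=COLUMNS, lineterminator="\n")
writer.writeheader()
writer.writerows(invoice_to_rows(invoice))
print(buffer.getvalue())
```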

JSON – Best for Automation and APIs

JSON (JavaScript Object Notation) is designed to represent structured hierarchical data. This makes it particularly well suited for automation platforms and system integrations.

Unlike CSV, JSON can represent relationships such as an invoice containing multiple line items.

JSON is ideal when:

- Sending data to APIs

- Feeding workflow engines

- Storing structured metadata

- Validating documents using schemas

- Integrating with modern applications

Because the data structure is preserved, JSON often becomes the preferred format for automation pipelines and microservices architectures.
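Here is the contrast in concrete terms. When the same invoice is serialised to JSON, the line items stay nested under their invoice, so the relationship survives a round trip. A consumer can also check the shape before acting on it.

This is a minimal standard-library sketch. `validate_invoice` is a hypothetical helper and a deliberately simple stand-in for a proper JSON Schema validator.

```python
import json

REQUIRED_FIELDS = ("VendorName", "InvoiceNumber", "InvoiceTotal", "LineItems")


def validate_invoice(invoice):
    """Return a list of problems; an empty list means the shape looks valid."""
    problems = [f"missing {key}" for key in REQUIRED_FIELDS if key not in invoice]
    items = invoice.get("LineItems", [])
    if not isinstance(items, list):
        problems.append("LineItems must be a list")
    else:
        for index, item in enumerate(items):
            if "Description" not in item:
                problems.append(f"line item {index} has no Description")
    return problems


invoice = {
    "VendorName": "ABC Supplies",
    "InvoiceNumber": "INV-1002",
    "InvoiceTotal": 1150,
    "LineItems": [{"Description": "Widget A", "Total": 500},
                  {"Description": "Widget B", "Total": 500}],
}

# Round trip: the line items are still grouped under their invoice
restored = json.loads(json.dumps(invoice))
print(len(restored["LineItems"]), validate_invoice(restored))
print(validate_invoice({"VendorName": "ABC Supplies", "LineItems": "oops"}))
```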

| Output Format | Best Used For |
|---|---|
| CSV | Reporting, finance imports, SQL loads, Excel analysis |
| JSON | Workflow automation, API integrations, structured metadata storage |

Best Practice: Support Both

In many enterprise IDP solutions, the most practical approach is to generate both formats from the same extraction process.

A common pattern looks like this:

- JSON feeds automation workflows, APIs, and validation logic

- CSV feeds reporting, finance systems, and analytics tools

By producing both outputs, organisations gain flexibility without needing to reprocess the original documents.

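In the automation pattern shown in this article, Azure Document Intelligence extracts the invoice metadata, and Python transforms the results into CSV, JSON, or both, depending on downstream requirements. The two scripts below show each output separately. Because they share the same extraction step, they can be combined into one script so that each document is analysed only once.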

This small design choice significantly increases the reusability and scalability of the document processing pipeline.
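Both outputs come from the same extracted data, so the CSV can also be regenerated later from a saved JSON file without sending the PDF to Azure again. This is a minimal sketch. It assumes the JSON file holds a list of invoices in the shape written by the JSON script later in this article, and it reuses the column headers of the CSV script. `csv_from_saved_json` is a hypothetical helper.

```python
import csv
import io
import json

# (JSON key, CSV column header) pairs matching the article's two scripts
HEADER_FIELDS = [("VendorName", "Vendor Name"), ("InvoiceNumber", "Invoice Number"),
                 ("InvoiceDate", "Invoice Date"), ("SubTotal", "Invoice SubTotal"),
                 ("TotalTax", "Invoice Total Tax"), ("InvoiceTotal", "Invoice Total")]
ITEM_FIELDS = [("Description", "LineItem Description"), ("UnitPrice", "LineItem Unit Price"),
               ("Quantity", "LineItem Quantity"), ("Total", "LineItem Total")]


def csv_from_saved_json(json_text):
    """Rebuild the line-item CSV from previously saved JSON output."""
    invoices = json.loads(json_text)
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow([header for _, header in HEADER_FIELDS + ITEM_FIELDS])
    for invoice in invoices:
        invoice_values = [invoice.get(key, "") for key, _ in HEADER_FIELDS]
        for item in invoice.get("LineItems", []):
            writer.writerow(invoice_values + [item.get(key, "") for key, _ in ITEM_FIELDS])
    return out.getvalue()


saved = json.dumps([{
    "VendorName": "ABC Supplies", "InvoiceNumber": "INV-1002",
    "InvoiceDate": "2025-02-12", "SubTotal": 1000, "TotalTax": 150,
    "InvoiceTotal": 1150,
    "LineItems": [{"Description": "Widget A", "UnitPrice": 50, "Quantity": 10, "Total": 500},
                  {"Description": "Widget B", "UnitPrice": 25, "Quantity": 20, "Total": 500}],
}])
print(csv_from_saved_json(saved))
```

Treating the JSON as the source of record and deriving the CSV from it is one way to keep the two outputs consistent.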

Sample CSV Output

Each invoice line item becomes a row in the CSV file.

```csv
Vendor Name,Invoice Number,Invoice Date,Invoice SubTotal,Invoice Total Tax,Invoice Total,LineItem Description,LineItem Unit Price,LineItem Quantity,LineItem Total
ABC Supplies,INV-1002,2025-02-12,1000,150,1150,Widget A,50,10,500
ABC Supplies,INV-1002,2025-02-12,1000,150,1150,Widget B,25,20,500
```

CSV is particularly useful when importing invoice data into ERP systems or analytics platforms.
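The sample also shows why CSV suits financial reconciliation: every row carries enough data to check the arithmetic. This minimal sketch reads the sample above and runs three checks:

- Each line total equals unit price times quantity.
- The line totals add up to the subtotal.
- Subtotal plus tax equals the invoice total.

`Decimal` avoids floating-point rounding surprises. `reconcile` is a hypothetical helper.

```python
import csv
import io
from collections import defaultdict
from decimal import Decimal

SAMPLE = """Vendor Name,Invoice Number,Invoice Date,Invoice SubTotal,Invoice Total Tax,Invoice Total,LineItem Description,LineItem Unit Price,LineItem Quantity,LineItem Total
ABC Supplies,INV-1002,2025-02-12,1000,150,1150,Widget A,50,10,500
ABC Supplies,INV-1002,2025-02-12,1000,150,1150,Widget B,25,20,500
"""


def reconcile(csv_text):
    """Return a list of arithmetic mismatches found in the line-item CSV."""
    problems = []
    line_sums = defaultdict(Decimal)  # running sum of line totals per invoice
    headers = {}                      # invoice number -> (subtotal, tax, total)
    for row in csv.DictReader(io.StringIO(csv_text)):
        invoice = row["Invoice Number"]
        price = Decimal(row["LineItem Unit Price"])
        qty = Decimal(row["LineItem Quantity"])
        line_total = Decimal(row["LineItem Total"])
        if price * qty != line_total:
            problems.append(f"{invoice} {row['LineItem Description']}: "
                            f"{price} x {qty} != {line_total}")
        line_sums[invoice] += line_total
        headers[invoice] = (Decimal(row["Invoice SubTotal"]),
                            Decimal(row["Invoice Total Tax"]),
                            Decimal(row["Invoice Total"]))
    for invoice, (subtotal, tax, total) in headers.items():
        if line_sums[invoice] != subtotal:
            problems.append(f"{invoice}: line totals {line_sums[invoice]} != subtotal {subtotal}")
        if subtotal + tax != total:
            problems.append(f"{invoice}: {subtotal} + {tax} != total {total}")
    return problems


print(reconcile(SAMPLE))  # prints []
```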

Sample JSON Output

JSON preserves the full document structure.

```json
{
    "VendorName": "ABC Supplies",
    "InvoiceNumber": "INV-1002",
    "InvoiceDate": "2025-02-12",
    "SubTotal": 1000,
    "TotalTax": 150,
    "InvoiceTotal": 1150,
    "LineItems": [
        {
            "Description": "Widget A",
            "UnitPrice": 50,
            "Quantity": 10,
            "Total": 500
        },
        {
            "Description": "Widget B",
            "UnitPrice": 25,
            "Quantity": 20,
            "Total": 500
        }
    ]
}
```

JSON is ideal for automation workflows, APIs, and structured data storage.
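Producing this JSON in Python raises one practical issue. Dates (and `Decimal` amounts, if you use them) are not JSON-serialisable by default, so `json.dump` raises a `TypeError`. The JSON script later in this article handles the invoice date by calling `str()` on it. An alternative is a `default` hook that converts these types in one place. This is a minimal sketch, where `to_jsonable` is a hypothetical helper.

```python
import json
from datetime import date
from decimal import Decimal


def to_jsonable(value):
    """json 'default' hook: convert types the json module cannot handle."""
    if isinstance(value, date):
        return value.isoformat()  # e.g. "2025-02-12"
    if isinstance(value, Decimal):
        return float(value)
    raise TypeError(f"{type(value).__name__} is not JSON serialisable")


invoice = {"InvoiceNumber": "INV-1002", "InvoiceDate": date(2025, 2, 12),
           "InvoiceTotal": Decimal("1150.00")}

try:
    json.dumps(invoice)
except TypeError as error:
    print("Without a hook:", error)

print(json.dumps(invoice, default=to_jsonable))
```

The same hook can be passed to `json.dump(..., default=to_jsonable)` when writing straight to a file.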

Python Script – CSV Output

Below is a Python script that extracts invoice metadata and outputs it as a CSV file.

```python
import csv
import os
import sys

from azure.ai.formrecognizer import DocumentAnalysisClient
from azure.core.credentials import AzureKeyCredential

# Replace with your Azure Document Intelligence resource endpoint and key
endpoint = "https://YOUR-ENDPOINT.cognitiveservices.azure.com/"
key = "YOUR_API_KEY"

client = DocumentAnalysisClient(endpoint, AzureKeyCredential(key))

# The invoice file name is passed in as a command-line argument
# (for example by the Nintex workflow); use the argument ending in .pdf
invoice_file = None
for arg in sys.argv[1:]:
    if arg.lower().endswith(".pdf"):
        invoice_file = arg

if invoice_file is None:
    sys.exit("No .pdf file name was passed to the script")

input_path = os.path.join("C:\\WorkSpace\\IDP\\Input", invoice_file)
output_path = os.path.join("C:\\WorkSpace\\IDP\\Output", invoice_file + ".csv")


def field_value(field):
    """Return a plain value for an extracted field, or "" when it is missing."""
    if field is None or field.value is None:
        return ""
    # Currency fields (SubTotal, TotalTax, InvoiceTotal, UnitPrice, Amount)
    # return a CurrencyValue object; keep just the numeric amount
    if hasattr(field.value, "amount"):
        return field.value.amount
    return field.value


# Send the PDF to the prebuilt invoice model and wait for the result
with open(input_path, "rb") as fd:
    invoice_bytes = fd.read()

poller = client.begin_analyze_document("prebuilt-invoice", invoice_bytes)
result = poller.result()

with open(output_path, "w", newline="", encoding="utf-8") as csvfile:
    writer = csv.writer(csvfile)
    writer.writerow([
        "Vendor Name",
        "Invoice Number",
        "Invoice Date",
        "Invoice SubTotal",
        "Invoice Total Tax",
        "Invoice Total",
        "LineItem Description",
        "LineItem Unit Price",
        "LineItem Quantity",
        "LineItem Total",
    ])

    for invoice in result.documents:
        # Invoice-level values are repeated on every line-item row
        invoice_values = [
            field_value(invoice.fields.get("VendorName")),
            field_value(invoice.fields.get("InvoiceId")),
            field_value(invoice.fields.get("InvoiceDate")),
            field_value(invoice.fields.get("SubTotal")),
            field_value(invoice.fields.get("TotalTax")),
            field_value(invoice.fields.get("InvoiceTotal")),
        ]

        items = invoice.fields.get("Items")
        if items:
            for item in items.value:
                writer.writerow(invoice_values + [
                    field_value(item.value.get("Description")),
                    field_value(item.value.get("UnitPrice")),
                    field_value(item.value.get("Quantity")),
                    field_value(item.value.get("Amount")),
                ])
```

Python Script – JSON Output

Below is an example script that produces JSON metadata instead.

```python
import json
import os
import sys

from azure.ai.formrecognizer import DocumentAnalysisClient
from azure.core.credentials import AzureKeyCredential

# Replace with your Azure Document Intelligence resource endpoint and key
endpoint = "https://YOUR-ENDPOINT.cognitiveservices.azure.com/"
key = "YOUR_API_KEY"

client = DocumentAnalysisClient(endpoint, AzureKeyCredential(key))

# The invoice file name is passed in as a command-line argument
# (for example by the Nintex workflow); use the argument ending in .pdf
invoice_file = None
for arg in sys.argv[1:]:
    if arg.lower().endswith(".pdf"):
        invoice_file = arg

if invoice_file is None:
    sys.exit("No .pdf file name was passed to the script")

input_path = os.path.join("C:\\WorkSpace\\IDP\\Input", invoice_file)
output_path = os.path.join("C:\\WorkSpace\\IDP\\Output", invoice_file + ".json")


def field_value(field):
    """Return a plain value for an extracted field, or "" when it is missing."""
    if field is None or field.value is None:
        return ""
    # Currency fields return a CurrencyValue object, which json.dump cannot
    # serialise; keep just the numeric amount
    if hasattr(field.value, "amount"):
        return field.value.amount
    return field.value


# Send the PDF to the prebuilt invoice model and wait for the result
with open(input_path, "rb") as fd:
    invoice_bytes = fd.read()

poller = client.begin_analyze_document("prebuilt-invoice", invoice_bytes)
result = poller.result()

# One entry per invoice detected in the PDF
json_output = []

for invoice in result.documents:
    fields = invoice.fields

    items = []
    line_items = fields.get("Items")
    if line_items:
        for item in line_items.value:
            items.append({
                "Description": field_value(item.value.get("Description")),
                "UnitPrice": field_value(item.value.get("UnitPrice")),
                "Quantity": field_value(item.value.get("Quantity")),
                "Total": field_value(item.value.get("Amount")),
            })

    json_output.append({
        "VendorName": field_value(fields.get("VendorName")),
        "InvoiceNumber": field_value(fields.get("InvoiceId")),
        # Dates are not JSON-serialisable, so store them as "YYYY-MM-DD" text
        "InvoiceDate": str(field_value(fields.get("InvoiceDate"))),
        "SubTotal": field_value(fields.get("SubTotal")),
        "TotalTax": field_value(fields.get("TotalTax")),
        "InvoiceTotal": field_value(fields.get("InvoiceTotal")),
        "LineItems": items,
    })

with open(output_path, "w", encoding="utf-8") as f:
    json.dump(json_output, f, indent=4)
```

In Conclusion

Combining Nintex RPA orchestration, Python scripting, and Azure Document Intelligence provides a powerful way to introduce AI-driven document automation without complex infrastructure.

This approach enables organisations to:

- Process invoices automatically

- Extract structured metadata from documents

- Feed downstream automation workflows

- Integrate with enterprise systems

By producing both CSV and JSON outputs, teams can serve both analytics and automation use cases.

For organisations already using Nintex, this pattern provides a simple and scalable path toward modern intelligent document processing.